AI Cybersecurity and Resilience

TrustThink’s Enterprise Toolset and Consulting Service

A trusted and effective approach to testing the robustness of artificial intelligence models and the data sets used to train them

TrustThink provides a full suite of services to address evolving AI security requirements, with expertise across a wide range of models and data sets. We conduct comprehensive robustness testing to determine whether systems are hardened and resilient against potential threats.

By identifying and analyzing vulnerabilities, we give organizations confidence that their AI solutions are effective, safeguarded, and reliable. With a focus on both current risks and emerging challenges, we provide assurance that your AI models are prepared to operate safely in real-world environments.

Features & Benefits

Perception AI Models

Generative AI

Red Teaming LLM Applications

Red Teaming Agentic AI Applications

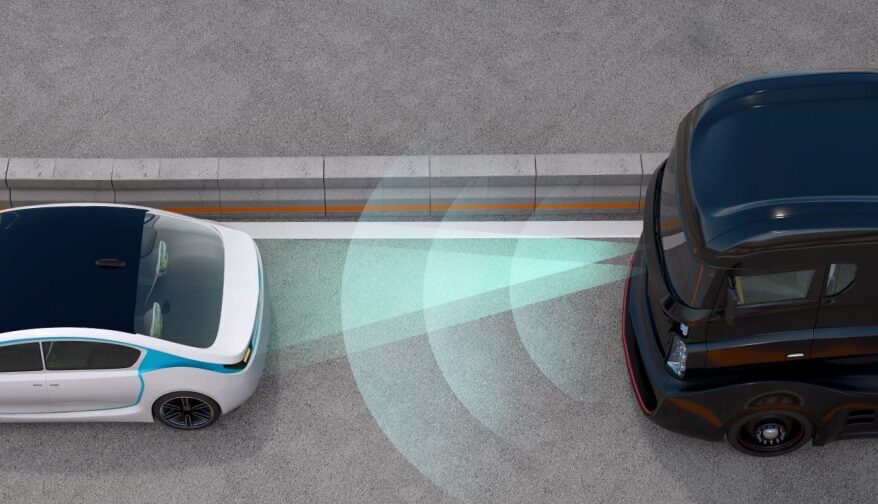

Perception AI Models

Perception models, such as those used for object detection, segmentation, and image classification, are increasingly deployed in safety-critical sectors where robustness and reliability are paramount.

To meet these demands, TrustThink has developed AssuredAI, a specialized testing and evaluation platform that provides a modular red-teaming framework tailored to image-based AI models. AssuredAI delivers scenario-based testing to uncover vulnerabilities and performance gaps. The platform validates the following:

Training Dataset Quality

Identifying weaknesses or biases that could impact performance

Model Robustness

Evaluating defenses against a customized adversarial dataset generated by AssuredAI

Effectiveness Under Drift

Testing model stability against environmental changes and data drift that occur in real-world deployment

Additionally, we offer domain-specific testing scenarios to ensure that evaluations are aligned with each model’s operational context.

Following every evaluation, TrustThink provides a detailed threat model tailored to the system under review, together with actionable mitigation recommendations based on the evaluation results. Mitigations are derived from recognized industry standards and best practices, including MITRE ATLAS, NIST AI RMF, CSA AICM, and ISO/IEC standards. This full evaluation package is designed to feed directly back into development workflows.

Generative AI

LLMs and agentic AI systems are increasingly embedded in critical workflows, but they introduce novel risks that traditional security testing cannot fully address.

TrustThink addresses these concerns by:

Applying a structured evaluation process to ensure your generative AI applications are secure and resilient.

Crafting tailored prompts that, when submitted to the application, reveal whether it is vulnerable to the threats identified in leading generative AI security guidance.

Generating a customized, dynamic penetration testing plan that fits your model’s specific risks and needs.

Red Teaming LLM Applications

For LLM-powered applications, our penetration testing focuses on vulnerabilities specific to these models, using techniques mapped directly to the OWASP Gen AI Security Project’s Top 10 for LLM Applications. Examples include:

Prompt Injection Attacks

Attempts to override system instructions, leading to undesired behaviors

Jailbreaks

Adversarial prompts engineered to bypass the model’s guardrails and elicit malicious responses

Data Leakage

Probing for exposure of sensitive training data, embeddings, or confidential context

Misinformation Injection

Injecting false or error-filled information that then propagates into the LLM’s output

Red Teaming Agentic AI Applications

Agentic applications give LLMs the autonomy to perform multi-step reasoning, use tools, and interact with external systems. This autonomy greatly expands the attack surface.

To meet this challenge, we extend our generative AI red teaming strategies to cover adversarial scenarios specific to these systems based on the OWASP Gen AI Security Project’s Top 10 for Agentic AI and MAESTRO. Examples include:

Tool Misuse

Manipulating an agent into abusing external tools

Goal Hijacking

Attempting to redirect the agent’s long-term objective

Memory & Context Poisoning

Corrupting agent memory or context to distort reasoning

Cascading Failures

Propagating hallucinations or misinformation through the agent, causing compounded failures

TrustThink’s custom red teaming approach ensures that generative AI applications are rigorously evaluated against the latest adversarial threats and aligned with leading frameworks. We provide quantitative reports and detailed, actionable recommendations for strengthening guardrails to mitigate identified threats.

These recommendations are designed to integrate seamlessly into your development and deployment pipelines, resulting in a hardened generative AI application that is resilient against adversarial threats and aligned with industry best practices for secure deployment.

Explore Additional Services

AI Capability Maturity Model (CMM)

ITS Cybersecurity

Medical Device Cybersecurity & FDA Compliance

Cryptographic Key Management Systems

Autonomous & Robotic Systems Cybersecurity

Research, Development, & Prototyping

Learn more about TrustThink’s AI Evaluation services today

Connect with our team to discuss how we can help secure your AI systems against adversarial threats